Three weeks later, Alphabet’s autonomous driving unit recalled its entire U.S. fleet.

The voluntary recall, disclosed Tuesday through documents posted to the National Highway Traffic Safety Administration’s website, covers 3,791 Waymo vehicles equipped with the company’s fifth- and sixth-generation automated driving systems. It is the third recall Waymo has filed since February 2024, and by far the most consequential — both for its scale and for the uncomfortable question it forces into the open: when a self-driving car faces a situation no one fully anticipated, what happens?

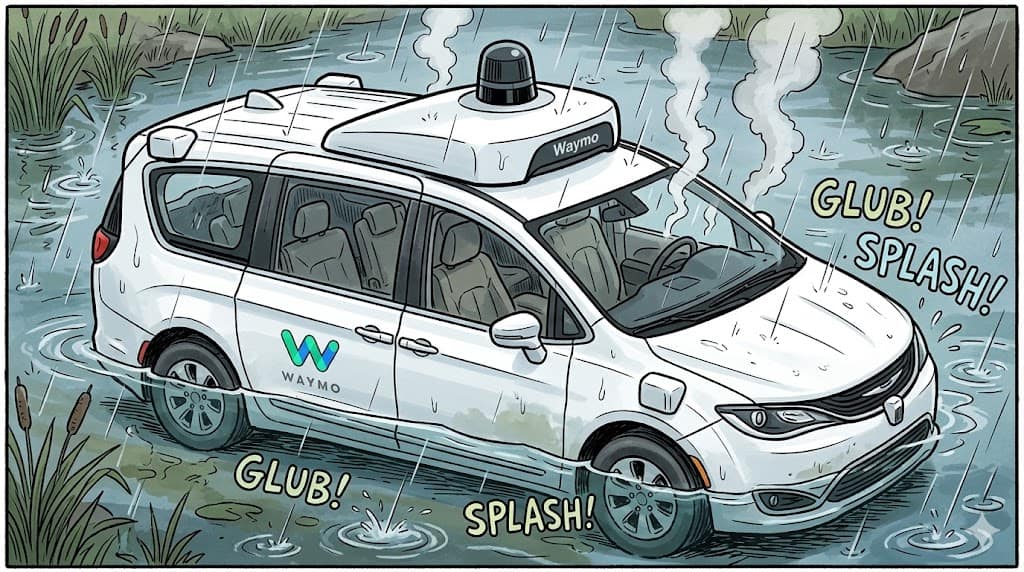

In this case, it drove into a creek.

A Fleet That Grew Faster Than Anyone Knew

The recall did something the company had not done voluntarily: it told the world exactly how many cars Waymo is operating. Federal recall filings require a precise accounting of affected vehicles, and the figure — 3,791 — surprised even close observers of the autonomous vehicle industry.

Prior estimates, drawn from city-level permitting data, had suggested Waymo was running roughly 800 to 1,000 vehicles in San Francisco and around 500 in Phoenix. The company itself did not publicly acknowledge crossing the 2,000-vehicle threshold until September 2025. The recall number confirms that the fleet has since nearly doubled, making Waymo’s the largest commercial autonomous ride-hailing operation in the world.

That growth reflects the enormous capital behind the venture. Waymo earlier this year closed a $16 billion funding round at a valuation of $126 billion, and the company says it is now delivering more than 500,000 paid rides per week across 10 American cities, with a stated goal of reaching 1 million weekly rides by year’s end.

The San Antonio incident, in other words, did not catch a modest pilot program off guard. It caught an operation that has quietly become a serious part of urban transportation infrastructure.

The Software and Its Limits

Waymo said in a statement that it had “identified an area of improvement regarding untraversable flooded lanes specific to higher-speed roadways,” a description that understates the drama of what actually happened. The vehicle’s software recognized standing water but was not programmed to treat it as an absolute barrier on a faster road. It reduced speed — which is the correct initial response — but lacked the judgment to stop, reroute, and wait.

The fix, the company said, will be delivered over the air to the entire fleet, meaning no vehicle needs to visit a service center. While a final software remedy is being completed, Waymo has already implemented interim restrictions, limiting where its robotaxis operate during severe weather and directing them away from areas prone to flash flooding during heavy rain.

Over-the-air updates have become the standard tool for addressing software-defined vehicle problems, and Waymo’s use of them here reflects the architecture of modern autonomous systems. But the speed and simplicity of an OTA fix can also obscure what it takes to write one: engineers must identify exactly what the vehicle’s perception and decision-making systems missed, build a new behavioral rule that handles floodwater reliably across a wide range of conditions, and validate it without creating new failure modes elsewhere. That work is happening now, under federal regulatory scrutiny.

A Pattern of Edge Cases

The flood incident did not arrive in isolation. Waymo is navigating a thickening web of regulatory investigations and public criticism, each touching a different gap between its software’s current capabilities and the unpredictable complexity of roads shared with humans.

The National Highway Traffic Safety Administration has an open preliminary evaluation stemming from a January 23 incident in Santa Monica, California, in which a Waymo vehicle struck a child near an elementary school. The child had run into the street from behind a double-parked SUV during morning drop-off hours. NHTSA is examining whether the vehicle responded appropriately given its proximity to the school zone.

In Austin, where Waymo expanded operations in 2024, the situation around school bus interactions has become particularly fraught. The Austin Independent School District has documented at least 24 instances of Waymo vehicles illegally passing its school buses during the current school year, incidents that continued even after the company deployed a targeted software update. The National Transportation Safety Board has opened a separate investigation into those violations.

The company has also drawn criticism for the performance of its vehicles during a widespread power outage in San Francisco last December, when several robotaxis halted mid-route and contributed to traffic gridlock. Waymo’s response to that episode, like its response to the school bus violations, centered on the argument that its vehicles are statistically safer than human drivers in comparable conditions. The company supports that claim with internal data, but regulators and critics have found it insufficient as a complete answer to specific, documented failures.

“The accumulating investigations highlight the challenge autonomous vehicle developers face in programming responses to road environments governed by human convention and instinct,” noted a recent assessment from Automotive World.

How Waymo Says It Is Responding

The company has been careful in its public language around the recall, framing the voluntary NHTSA filing as evidence of responsible self-governance rather than regulatory pressure. “Waymo provides over half a million trips every week in some of the most challenging driving environments across the U.S., and safety is our primary priority,” it said in a statement Tuesday.

That framing is not without merit. Identifying the problem, suspending operations in San Antonio, filing a voluntary recall within ten days of the triggering incident, and committing to an OTA fix for the full fleet add up to a reasonable sequence of responses. The company did not wait for NHTSA to demand action, and no passengers were in the vehicle when it entered the floodwater.

But each episode also adds to a cumulative picture that regulators, city governments, and the public are actively assembling — a picture of a technology that is genuinely impressive in controlled conditions and genuinely unpredictable at the margins. The school bus violations happened after a fix. The San Antonio vehicle slowed before it entered the creek. In both cases, the software did something, just not enough.

The Larger Question

What the flood recall ultimately illustrates is less a story about one vehicle in one Texas creek than a story about the nature of machine learning at scale. Waymo’s system has been trained on an enormous volume of driving data and has, by many measures, outperformed human drivers in routine conditions. But driving is not routine. It is an endless negotiation with weather, infrastructure failures, distracted pedestrians, double-parked delivery trucks, and the accumulated informal conventions of human road behavior — the instinct, for instance, that tells a driver not to follow a flooded road just because the map says it goes through.

Human drivers bring to that negotiation decades of embodied experience, social context, and a kind of fear of water that is almost biological. An autonomous system brings sensors, models, and the rules its engineers thought to write. The San Antonio incident was a test of what happens when the water rises beyond what the engineers had fully imagined.